Streaming language models

Language models that support streaming responses. See https://replicate.com/docs/streaming
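Streamed predictions arrive as a sequence of server-sent events rather than as one finished response. The sketch below is a minimal, offline illustration. It assumes the stream uses the standard text/event-stream format with the event names `output` (a chunk of generated text), `error`, and `done`, as described on the linked streaming page. `SSEEvent`, `parse_sse`, and `collect_output` are hypothetical helpers written for this example, not part of any Replicate client library, and fetching the raw lines over HTTP is left out.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass
class SSEEvent:
    """One server-sent event: an event name plus its (possibly multi-line) data."""
    event: str
    data: str


def parse_sse(lines: Iterable[str]) -> Iterator[SSEEvent]:
    """Group raw text/event-stream lines into events.

    A blank line ends an event; several `data:` lines in one event are
    joined with newlines, following the SSE format.
    """
    event_name = "message"  # SSE default when no `event:` field is sent
    data_lines: list[str] = []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":
            if data_lines:
                yield SSEEvent(event_name, "\n".join(data_lines))
            event_name, data_lines = "message", []
            continue
        if line.startswith(":"):
            continue  # comment or keep-alive line
        field, _, value = line.partition(":")
        if value.startswith(" "):
            value = value[1:]  # SSE strips exactly one leading space
        if field == "event":
            event_name = value
        elif field == "data":
            data_lines.append(value)
        # other fields (e.g. `id`, `retry`) are ignored in this sketch
    if data_lines:
        yield SSEEvent(event_name, "\n".join(data_lines))


def collect_output(lines: Iterable[str]) -> str:
    """Concatenate `output` chunks until `done`; raise on `error`.

    Assumption: event names follow the streaming docs (output/error/done);
    any other event type, such as logs, is skipped.
    """
    pieces: list[str] = []
    for ev in parse_sse(lines):
        if ev.event == "output":
            pieces.append(ev.data)
        elif ev.event == "error":
            raise RuntimeError(ev.data)
        elif ev.event == "done":
            break
    return "".join(pieces)


if __name__ == "__main__":
    # Hypothetical captured stream, one list item per line.
    sample = [
        "event: output", "id: 1", "data: Hello", "",
        "event: output", "id: 2", "data: , world", "",
        "event: done", "data: {}", "",
    ]
    print(collect_output(sample))  # prints: Hello, world
```

Keeping the parser separate from the accumulation step means the same `parse_sse` loop could print each `output` chunk as it arrives instead of joining them at the end.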

Recommended models

meta/meta-llama-3-70b-instruct

A 70 billion parameter language model from Meta, fine-tuned for chat completions

meta/meta-llama-3-8b

Base version of Llama 3, an 8 billion parameter language model from Meta.

meta/meta-llama-3-8b-instruct

An 8 billion parameter language model from Meta, fine-tuned for chat completions

yorickvp/llava-13b

Visual instruction tuning towards large language and vision models with GPT-4 level capabilities

mistralai/mixtral-8x7b-instruct-v0.1

The Mixtral-8x7B-instruct-v0.1 Large Language Model (LLM) is a pretrained generative Sparse Mixture of Experts tuned to be a helpful assistant.

meta/llama-2-7b-chat

A 7 billion parameter language model from Meta, fine-tuned for chat completions

meta/llama-2-70b-chat

A 70 billion parameter language model from Meta, fine-tuned for chat completions

meta/llama-2-13b-chat

A 13 billion parameter language model from Meta, fine-tuned for chat completions

mistralai/mistral-7b-instruct-v0.2

The Mistral-7B-Instruct-v0.2 Large Language Model (LLM) is an improved instruct fine-tuned version of Mistral-7B-Instruct-v0.1.

yorickvp/llava-v1.6-vicuna-13b

LLaVA v1.6: Large Language and Vision Assistant (Vicuna-13B)

fofr/prompt-classifier

Determines the toxicity of text-to-image prompts (a llama-13b fine-tune). Outputs a [SAFETY_RANKING] between 0 (safe) and 10 (toxic).

mistralai/mistral-7b-v0.1

A 7 billion parameter language model from Mistral.

yorickvp/llava-v1.6-mistral-7b

LLaVA v1.6: Large Language and Vision Assistant (Mistral-7B)

yorickvp/llava-v1.6-34b

LLaVA v1.6: Large Language and Vision Assistant (Nous-Hermes-2-34B)

replicate-internal/llama-2-70b-triton

mistralai/mistral-7b-instruct-v0.1

An instruction-tuned 7 billion parameter language model from Mistral

snowflake/snowflake-arctic-instruct

An efficient, intelligent, and truly open-source language model

meta/llama-2-7b

Base version of Llama 2 7B, a 7 billion parameter language model

replicate/dolly-v2-12b

An open source instruction-tuned large language model developed by Databricks

spuuntries/flatdolphinmaid-8x7b-gguf

Undi95's FlatDolphinMaid 8x7B Mixtral Merge, GGUF Q5_K_M quantized by TheBloke.

meta/llama-2-70b

Base version of Llama 2, a 70 billion parameter language model from Meta.

meta/meta-llama-3-70b

Base version of Llama 3, a 70 billion parameter language model from Meta.

joehoover/instructblip-vicuna13b

An instruction-tuned multi-modal model based on BLIP-2 and Vicuna-13B

01-ai/yi-34b-chat

The Yi series models are large language models trained from scratch by developers at 01.AI.

replicate/vicuna-13b

A large language model that's been fine-tuned on ChatGPT interactions

nateraw/goliath-120b

An autoregressive causal LM created by merging two fine-tuned Llama-2 70B models into one.

andreasjansson/sheep-duck-llama-2-70b-v1-1-gguf

meta/llama-2-13b

Base version of Llama 2 13B, a 13 billion parameter language model

antoinelyset/openhermes-2-mistral-7b-awq

01-ai/yi-6b

The Yi series models are large language models trained from scratch by developers at 01.AI.

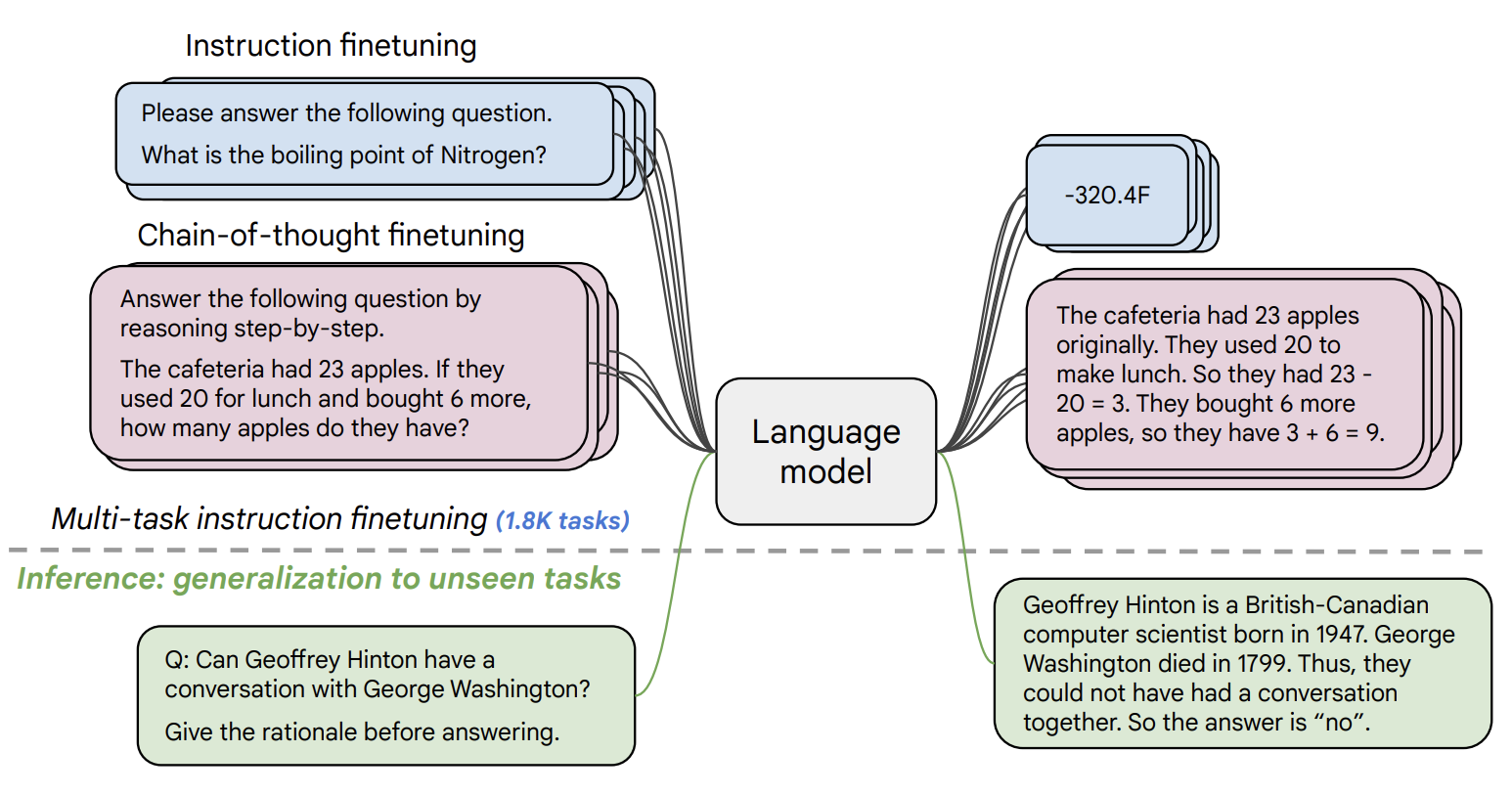

replicate/flan-t5-xl

A language model by Google for tasks like classification, summarization, and more

meta/codellama-34b-instruct

A 34 billion parameter Llama tuned for coding and conversation

meta/codellama-13b

A 13 billion parameter Llama tuned for code completion

stability-ai/stablelm-tuned-alpha-7b

7 billion parameter version of Stability AI's language model

replicate/llama-7b

Transformers implementation of the LLaMA language model

nateraw/openchat_3.5-awq

OpenChat: Advancing Open-source Language Models with Mixed-Quality Data

google-deepmind/gemma-2b-it

2B instruct version of Google’s Gemma model

lucataco/phi-3-mini-4k-instruct

Phi-3-Mini-4K-Instruct is a 3.8B-parameter, lightweight, state-of-the-art open model trained with the Phi-3 datasets

lucataco/moondream2

moondream2 is a small vision language model designed to run efficiently on edge devices

nateraw/mistral-7b-openorca

Mistral-7B-v0.1 fine-tuned for chat with the OpenOrca dataset.

google-deepmind/gemma-7b-it

7B instruct version of Google’s Gemma model

nateraw/nous-hermes-2-solar-10.7b

Nous Hermes 2 - SOLAR 10.7B is the flagship Nous Research model on the SOLAR 10.7B base model.

joehoover/mplug-owl

An instruction-tuned multimodal large language model that generates text based on user-provided prompts and images

fofr/image-prompts

Generate image prompts for Midjourney. Prefix inputs with "Image: "

meta/codellama-13b-instruct

A 13 billion parameter Llama tuned for coding and conversation

joehoover/falcon-40b-instruct

A 40 billion parameter language model trained to follow human instructions.

meta/codellama-7b-instruct

A 7 billion parameter Llama tuned for coding and conversation

kcaverly/dolphin-2.5-mixtral-8x7b-gguf

Mixtral-8x7b MOE model trained for chat with the dolphin dataset, quantized

yorickvp/llava-v1.6-vicuna-7b

LLaVA v1.6: Large Language and Vision Assistant (Vicuna-7B)

replicate/oasst-sft-1-pythia-12b

An open source instruction-tuned large language model developed by Open-Assistant

lucataco/dolphin-2.2.1-mistral-7b

Mistral-7B-v0.1 fine-tuned for chat with the Dolphin dataset (an open-source implementation of Microsoft's Orca)

kcaverly/openchat-3.5-1210-gguf

The "Overall Best Performing Open Source 7B Model" for Coding + Generalization or Mathematical Reasoning

nateraw/defog-sqlcoder-7b-2

A capable large language model for natural language to SQL generation.

andreasjansson/codellama-7b-instruct-gguf

CodeLlama-7B-instruct with support for grammars and jsonschema

meta/codellama-70b-instruct

A 70 billion parameter Llama tuned for coding and conversation

uwulewd/airoboros-llama-2-70b

Runs inference on Airoboros L2 70B 2.1 (GPTQ) using ExLlama.

lucataco/wizardcoder-33b-v1.1-gguf

WizardCoder: Empowering Code Large Language Models with Evol-Instruct

replicate/lifeboat-70b

meta/codellama-7b

A 7 billion parameter Llama tuned for coding and conversation

nomagick/chatglm3-6b

A 6B parameter open-source bilingual chat LLM

spuuntries/miqumaid-v1-70b-gguf

NeverSleep's MiquMaid v1 70B Miqu Finetune, GGUF Q3_K_M quantized by NeverSleep.

gregwdata/defog-sqlcoder-q8

Defog's SQLCoder is a state-of-the-art LLM for converting natural language questions to SQL queries. SQLCoder is a 15B parameter model fine-tuned on a base StarCoder model.

lucataco/dolphin-2.1-mistral-7b

Mistral-7B-v0.1 fine-tuned for chat with the Dolphin dataset (an open-source implementation of Microsoft's Orca)

kcaverly/neuralbeagle14-7b-gguf

NeuralBeagle14-7B is (probably) the best 7B model you can find!

antoinelyset/openhermes-2.5-mistral-7b

nomagick/chatglm2-6b

ChatGLM2-6B: An Open-Source Bilingual Chat LLM

lucataco/moondream1

(Research only) Moondream1 is a vision language model that performs on par with models twice its size

meta/codellama-34b

A 34 billion parameter Llama tuned for coding and conversation

kcaverly/nous-hermes-2-yi-34b-gguf

Nous Hermes 2 - Yi-34B is a state-of-the-art Yi fine-tune, trained on GPT-4-generated synthetic data

replicate/gpt-j-6b

A large language model by EleutherAI

andreasjansson/llama-2-13b-chat-gguf

Llama-2 13B chat with support for grammars and jsonschema

replicate/mpt-7b-storywriter

A 7B parameter LLM fine-tuned to support contexts with more than 65K tokens

nateraw/nous-hermes-llama2-awq

TheBloke/Nous-Hermes-Llama2-AWQ served with vLLM

lucataco/wizard-vicuna-13b-uncensored

This is wizard-vicuna-13b trained with a subset of the dataset - responses that contained alignment / moralizing were removed

joehoover/zephyr-7b-alpha

A high-performing language model trained to act as a helpful assistant

google-deepmind/gemma-7b

7B base version of Google’s Gemma model

hikikomori-haven/solar-uncensored

meta/codellama-34b-python

A 34 billion parameter Llama tuned for coding with Python

nateraw/zephyr-7b-beta

Zephyr-7B-beta, an LLM trained to act as a helpful assistant.

replicate/llama-13b-lora

Transformers implementation of the LLaMA 13B language model

lucataco/llama-3-vision-alpha

Projection module trained to add vision capabilities to Llama 3 using SigLIP

nomagick/qwen-14b-chat

Qwen-14B-Chat is a Transformer-based large language model, which is pretrained on a large volume of data, including web texts, books, codes, etc.

kcaverly/dolphin-2.7-mixtral-8x7b-gguf

Uncensored Mixtral-8x7b MOE model trained for chat with the Dolphin dataset

lucataco/phi-3-mini-128k-instruct

Phi-3-Mini-128K-Instruct is a 3.8 billion-parameter, lightweight, state-of-the-art open model trained using the Phi-3 datasets

01-ai/yi-6b-chat

The Yi series models are large language models trained from scratch by developers at 01.AI.

cuuupid/glm-4v-9b

GLM-4V is a multimodal model released by Tsinghua University that is competitive with GPT-4o and establishes a new SOTA on several benchmarks, including OCR.

nateraw/salmonn

SALMONN: Speech Audio Language Music Open Neural Network

meta/codellama-13b-python

A 13 billion parameter Llama tuned for coding with Python

lucataco/qwen1.5-72b

Qwen1.5 is the beta version of Qwen2, a transformer-based decoder-only language model pretrained on a large amount of data

meta/codellama-7b-python

A 7 billion parameter Llama tuned for coding with Python

joehoover/sql-generator

mikeei/dolphin-2.9-llama3-70b-gguf

Dolphin is uncensored. I have filtered the dataset to remove alignment and bias. This makes the model more compliant.

anotherjesse/llava-lies

LLaVA injecting randomness into the image

kcaverly/dolphin-2.6-mixtral-8x7b-gguf

Mixtral-8x7b MOE model trained for chat with the dolphin + samantha's empathy dataset

lucataco/qwen1.5-110b

Qwen1.5 is the beta version of Qwen2, a transformer-based decoder-only language model pretrained on a large amount of data

organisciak/ocsai-llama2-7b

01-ai/yi-34b

The Yi series models are large language models trained from scratch by developers at 01.AI.

andreasjansson/llama-2-70b-chat-gguf

Llama-2 70B chat with support for grammars and jsonschema

kcaverly/deepseek-coder-33b-instruct-gguf

A quantized 33B parameter language model from Deepseek for SOTA repository level code completion

deepseek-ai/deepseek-math-7b-base

Pushing the Limits of Mathematical Reasoning in Open Language Models - Base model

replit/replit-code-v1-3b

Generate code with Replit's replit-code-v1-3b large language model

01-ai/yi-34b-200k

The Yi series models are large language models trained from scratch by developers at 01.AI.

nousresearch/hermes-2-theta-llama-8b

Hermes-2 Θ (Theta) is the first experimental merged model released by Nous Research, in collaboration with Charles Goddard at Arcee, the team behind MergeKit.

lucataco/deepseek-vl-7b-base

DeepSeek-VL: An open-source Vision-Language Model designed for real-world vision and language understanding applications

mattt/orca-2-13b

niron1/openorca-platypus2-13b

OpenOrca-Platypus2-13B is a merge of garage-bAInd/Platypus2-13B and Open-Orca/OpenOrcaxOpenChat-Preview2-13B.

camenduru/wizardlm-2-8x22b

WizardLM 2 8x22B

deepseek-ai/deepseek-math-7b-instruct

Pushing the Limits of Mathematical Reasoning in Open Language Models - Instruct model

daanelson/flan-t5-large

A language model for tasks like classification, summarization, and more.

meta/codellama-70b

A 70 billion parameter Llama tuned for coding and conversation

anotherjesse/sdxl-recur

Explore img2img zooming with SDXL

hayooucom/vision-model

This is the Phi-3-vision model, billed by time. Have fun!

meta/codellama-70b-python

A 70 billion parameter Llama tuned for coding with Python

niron1/qwen-7b-chat

Qwen-7B is the 7B-parameter version of the large language model series Qwen (abbr. Tongyi Qianwen), proposed by Alibaba Cloud. Qwen-7B is a Transformer-based large language model, which is pretrained on a large volume of data, including web texts, books, code, etc.

nateraw/causallm-14b

CausalLM/14B model with AWQ quantization. Perhaps better than all existing models under 70B in most quantitative evaluations.

kcaverly/nous-capybara-34b-gguf

A state-of-the-art Nous Research fine-tune of Yi-34B-200K, trained on the Capybara dataset.

nateraw/samsum-llama-2-13b

spuuntries/miqumaid-v2-2x70b-dpo-gguf

NeverSleep's MiquMaid v2 2x70B Miqu-Mixtral MoE DPO Finetune, GGUF Q2_K quantized by NeverSleep.

hamelsmu/llama-3-70b-instruct-awq-with-tools

Function calling with Llama 3 using prompting only.

nomagick/qwen-vl-chat

Qwen-VL-Chat but with raw ChatML prompt interface and streaming

andreasjansson/codellama-34b-instruct-gguf

CodeLlama-34B-instruct with support for grammars and jsonschema

nwhitehead/llama2-7b-chat-gptq

andreasjansson/wizardcoder-python-34b-v1-gguf

WizardCoder-python-34B-v1.0 with support for grammars and jsonschema

andreasjansson/llama-2-13b-gguf

Llama-2 13B with support for grammars and jsonschema

moinnadeem/vllm-engine-llama-7b

charles-dyfis-net/llama-2-13b-hf--lmtp-8bit

ruben-svensson/llama2-aqua-test1

google-deepmind/gemma-2b

2B base version of Google’s Gemma model

antoinelyset/openhermes-2.5-mistral-7b-awq

papermoose/llama-pajama

stability-ai/stablelm-base-alpha-7b

7B parameter base version of Stability AI's language model

fofr/llama2-prompter

Llama2 13b base model fine-tuned on text to image prompts

kcaverly/deepseek-coder-6.7b-instruct

A ~7B parameter language model from Deepseek for SOTA repository level code completion

mikeei/dolphin-2.9-llama3-8b-gguf

Dolphin is uncensored. I have filtered the dataset to remove alignment and bias. This makes the model more compliant.

spuuntries/erosumika-7b-v3-0.2-gguf

localfultonextractor's Erosumika 7B Mistral Merge, GGUF Q4_K_S-imat quantized by Lewdiculous.

lucataco/gemma2-27b-it

Google's Gemma2 27b instruct model

fofr/star-trek-gpt-j-6b

gpt-j-6b trained on the Memory Alpha Star Trek Wiki

replicate-internal/staging-llama-2-7b

stability-ai/stablelm-base-alpha-3b

3B parameter base version of Stability AI's language model

andreasjansson/plasma

Generate plasma shader equations

nateraw/sqlcoder-70b-alpha

camenduru/mixtral-8x22b-instruct-v0.1

Mixtral 8x22b Instruct v0.1

lucataco/hermes-2-pro-llama-3-8b

Hermes 2 Pro is trained on an updated and cleaned version of the OpenHermes 2.5 Dataset, as well as a newly introduced Function Calling and JSON Mode dataset developed in-house

theghoul21/srl

kcaverly/dolphin-2.6-mistral-7b-gguf

Mistral 7B v2 fine-tuned on the Dolphin dataset

lucataco/tinyllama-1.1b-chat-v1.0

This is the chat model finetuned on top of TinyLlama/TinyLlama-1.1B-intermediate-step-1431k-3T

nomagick/chatglm3-6b-32k

A 6B parameter open-source bilingual chat LLM (optimized for 8k+ context)

nateraw/axolotl-llama-2-7b-english-to-hinglish

ignaciosgithub/pllava

lucataco/qwen1.5-14b

Qwen1.5 is the beta version of Qwen2, a transformer-based decoder-only language model pretrained on a large amount of data

peter65374/openbuddy-llemma-34b-gguf

This is a Cog implementation of the 4-bit quantized "openbuddy-llemma-34b" model.

niron1/llama-2-7b-chat

The Llama 2 7B chat version by Meta, with streaming support, an unaltered prompt, properly working temperature, and economical hardware.

cbh123/dylan-lyrics

Llama 2 13B fine-tuned on Bob Dylan lyrics

antoinelyset/openhermes-2-mistral-7b

Simple version of https://huggingface.co/teknium/OpenHermes-2-Mistral-7B

camenduru/mixtral-8x22b-v0.1-instruct-oh

Mixtral-8x22b-v0.1-Instruct-Open-Hermes

kcaverly/phind-codellama-34b-v2-gguf

A quantized 34B parameter language model from Phind for code completion

adirik/mamba-2.8b

Base version of Mamba 2.8B, a 2.8 billion parameter state space language model

hayooucom/llm-60k

An LLM for Chinese (CN)

nomagick/chatglm2-6b-int4

ChatGLM2-6B: An Open-Source Bilingual Chat LLM (int4)

zeke/nyu-llama-2-7b-chat-training-test

A test model for fine-tuning Llama 2

lucataco/phixtral-2x2_8

phixtral-2x2_8 is the first Mixture of Experts (MoE) made with two microsoft/phi-2 models, inspired by the mistralai/Mixtral-8x7B-v0.1 architecture

kcaverly/nous-hermes-2-solar-10.7b-gguf

Nous Hermes 2 - SOLAR 10.7B is the flagship Nous Research model on the SOLAR 10.7B base model.

hamelsmu/honeycomb-2

Honeycomb NLQ Generator

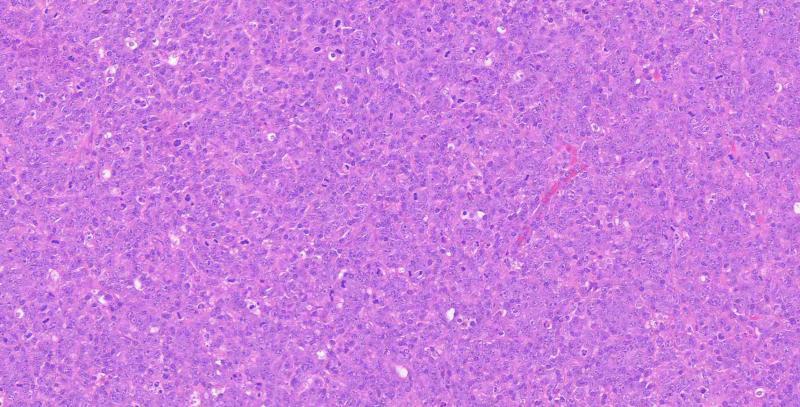

xrunda/med

kcaverly/nexus-raven-v2-13b-gguf

A quantized 13B parameter language model from NexusFlow for SOTA zero-shot function calling

zallesov/super-real-llama2

mikeei/dolphin-2.9.1-llama3-8b-gguf

Dolphin is uncensored. I have filtered the dataset to remove alignment and bias. This makes the model more compliant.

fofr/star-trek-adventure

nateraw/stablecode-completion-alpha-3b-4k

fofr/neuromancer-13b

llama-13b-base fine-tuned on Neuromancer style

swartype/lanne-m1-70b

Lanne M1 is the first language model produced by Lanne Tech. It has 70B+ parameters, with performance equivalent to GPT-3.5.

m1guelpf/mario-gpt

Using language models to generate Super Mario Bros levels

camenduru/zephyr-orpo-141b-a35b-v0.1

Mixtral 8x22b v0.1 Zephyr Orpo 141b A35b v0.1

nateraw/samsum-llama-7b

llama-2-7b fine-tuned on the samsum dataset for dialogue summarization

fofr/star-trek-flan

flan-t5-xl trained on the Memory Alpha Star Trek Wiki

zsxkib/qwen2-0.5b-instruct

Qwen 2: A 0.5 billion parameter language model from Alibaba Cloud, fine-tuned for chat completions

fofr/star-trek-llama

llama-7b trained on the Memory Alpha Star Trek Wiki

cuuupid/minicpm-llama3-v-2.5

MiniCPM-Llama3-V 2.5, a new state-of-the-art open-source VLM that surpasses GPT-4V-1106 and Phi-128k on a number of benchmarks.

titocosta/notus-7b-v1

Notus-7b-v1 model

moinnadeem/fastervicuna_13b

Re-implements LLaMA with higher MFU (model FLOPs utilization)

nateraw/llama-2-7b-paraphrase-v1

lucataco/qwen1.5-7b

Qwen1.5 is the beta version of Qwen2, a transformer-based decoder-only language model pretrained on a large amount of data

cbh123/samsum

adirik/mamba-130m

Base version of Mamba 130M, a 130 million parameter state space language model

cjwbw/opencodeinterpreter-ds-6.7b

OpenCodeInterpreter: Integrating Code Generation with Execution and Refinement

rybens92/una-cybertron-7b-v2--lmtp-8bit

nateraw/wizardcoder-python-34b-v1.0

automorphic-ai/runhouse

cjwbw/starcoder2-15b

Language Models for Code

crowdy/line-lang-3.6b

An implementation of a 3.6B parameter Japanese large language model

lucataco/hermes-2-pro-llama-3-70b

Hermes 2 Pro is trained on an updated and cleaned version of the OpenHermes 2.5 Dataset, as well as a newly introduced Function Calling and JSON Mode dataset developed in-house

nateraw/llama-2-7b-chat-hf

nateraw/aidc-ai-business-marcoroni-13b

moinnadeem/codellama-34b-instruct-vllm

tanzir11/merge

lucataco/olmo-7b

OLMo is a series of Open Language Models designed to enable the science of language models

lucataco/qwen1.5-1.8b

Qwen1.5 is the beta version of Qwen2, a transformer-based decoder-only language model pretrained on a large amount of data

spuuntries/borealis-10.7b-dpo-gguf

Undi95's Borealis 10.7B Mistral DPO Finetune, GGUF Q5_K_M quantized by Undi95.

nateraw/codellama-7b-instruct-hf

zsxkib/qwen2-1.5b-instruct

Qwen 2: A 1.5 billion parameter language model from Alibaba Cloud, fine-tuned for chat completions

lucataco/hermes-2-theta-llama-3-70b

Hermes-2 Θ (Theta) 70B is the continuation of our experimental merged model released by Nous Research

juanjaragavi/abby-llama-2-7b-chat

Abby is a stoic philosopher and a loving and caring mature woman.

peter65374/openbuddy-mistral-7b

OpenBuddy fine-tuned Mistral-7B, 4-bit GPTQ quantized by TheBloke

lidarbtc/kollava-v1.5

Korean version of LLaVA-v1.5

replicate-internal/mixtral-8x7b-instruct-v0.1-pget

lucataco/nous-hermes-2-mixtral-8x7b-dpo

Nous Hermes 2 Mixtral 8x7B DPO is a Nous Research model trained over the Mixtral 8x7B MoE LLM

martintmv-git/moondream2

A small vision language model

lucataco/qwen1.5-0.5b

Qwen1.5 is the beta version of Qwen2, a transformer-based decoder-only language model pretrained on a large amount of data

chigozienri/llava-birds

lucataco/yi-1.5-6b

Yi-1.5 is continuously pre-trained on Yi with a high-quality corpus of 500B tokens and fine-tuned on 3M diverse fine-tuning samples

cjwbw/c4ai-command-r-v01

CohereForAI c4ai-command-r-v01, quantized to 8-bit precision with bitsandbytes

dsingal0/mixtral-single-gpu

Runs Mixtral 8x7B on a single A40 GPU

cbh123/homerbot

lucataco/gemma2-9b-it

Google's Gemma2 9b instruct model

replicate-internal/staging-honeycomb-triton

A fast version of replicate.com/hamelsmu/honeycomb-2 using TRT-LLM

titocosta/starling

Starling-LM-7B-alpha

replicate/elixir-gen

Fine-tuned Llama 13b on Elixir docstrings (WIP)

technillogue/mixtral-instruct-nix

adirik/mamba-1.4b

Base version of Mamba 1.4B, a 1.4 billion parameter state space language model

adirik/mamba-2.8b-slimpj

Base version of Mamba 2.8B SlimPajama, a 2.8 billion parameter state space language model

lucataco/deepseek-67b-base

DeepSeek LLM, an advanced language model comprising 67 billion parameters. Trained from scratch on a vast dataset of 2 trillion tokens in both English and Chinese

nateraw/llama-2-7b-samsum

hamelsmu/honeycomb

Honeycomb NLQ Generator

sruthiselvaraj/finetuned-llama2

adirik/mamba-370m

Base version of Mamba 370M, a 370 million parameter state space language model

nateraw/gairmath-abel-7b

lucataco/qwen1.5-32b

Qwen1.5 is the beta version of Qwen2, a transformer-based decoder-only language model pretrained on a large amount of data

adirik/mamba-790m

Base version of Mamba 790M, a 790 million parameter state space language model

seanoliver/bob-dylan-fun-tuning

Llama fine-tune-athon project training Llama 2 on Bob Dylan lyrics.

lorenzomarines/nucleum-theta-240

A powerful LLM competitive with Claude Sonnet and GPT-3.5, but fully open source and decentralized

lorenzomarines/nucleum-apollonio-14b

intentface/poro-34b-gguf-checkpoint

Try out akx/Poro-34B-gguf (Q5_K). This is the 1000B checkpoint model.

zsxkib/qwen2-7b-instruct

Qwen 2: A 7 billion parameter language model from Alibaba Cloud, fine-tuned for chat completions

fleshgordo/orni2-chat

nateraw/codellama-7b-instruct

lucataco/qwen1.5-4b

Qwen1.5 is the beta version of Qwen2, a transformer-based decoder-only language model pretrained on a large amount of data

mattt/whisper-tiny-en

charles-dyfis-net/llama-2-7b-hf--lmtp-4bit

nateraw/codellama-34b

johnnyoshika/llama2-combine-numbers

divyavanmahajan/my-pet-llama

juanjaragavi/abbot-llama-2-7b-chat

Abbot is a brutally honest stoic philosopher. He is here to help the 'User' be their best self, no coddling.

msamogh/iiu-generator-llama2-7b-2

jquintanilla4/qwen1.5-32b-chat

Qwen1.5 32B Chat variant. A transformer-based decoder-only language model. Good with Chinese and English.

nateraw/codellama-7b

mattt/whisper-large-streaming

charles-dyfis-net/llama-2-13b-hf--lmtp

replicate-internal/gemma-2b-it

2B instruct version of the Gemma model

halevi/sandbox1

lucataco/hermes-2-theta-llama-3-8b

Hermes-2 Θ (Theta) is the first experimental merged model released by Nous Research

hayooucom/vision-llama3

For testing

lucataco/qwen2-57b-a14b-instruct

A 57 billion parameter Qwen2 language model from Alibaba Cloud, fine-tuned for chat completions

nateraw/codellama-13b

demonpore-sys/llamaxine0.1

lucataco/dolphin-2.9-llama3-8b

Dolphin-2.9 has a variety of instruction, conversational, and coding skills. It also has initial agentic abilities and supports function calling

charles-dyfis-net/llama-2-13b-hf--lmtp-4bit

nateraw/codellama-13b-instruct

replicate-internal/hermes-2-theta-l3-8b-fp16-triton