Readme

Model Details

MAEST is a family of Transformer models based on PASST and focused on music analysis applications. The MAEST models are also available for inference in the Essentia library and for inference and training in the official repository.

Model Description

- Developed by: Pablo Alonso

- Shared by: Pablo Alonso

- Model type: Transformer

- License: cc-by-nc-sa-4.0

- Finetuned from model: PaSST

Model Sources

- Repository: MAEST

- Paper: Efficient Supervised Training of Audio Transformers for Music Representation Learning

Uses

MAEST is a music audio representation model pre-trained on the task of music style classification. According to the evaluation reported in the original paper, it reports good performance in several downstream music analysis tasks.

Direct Use

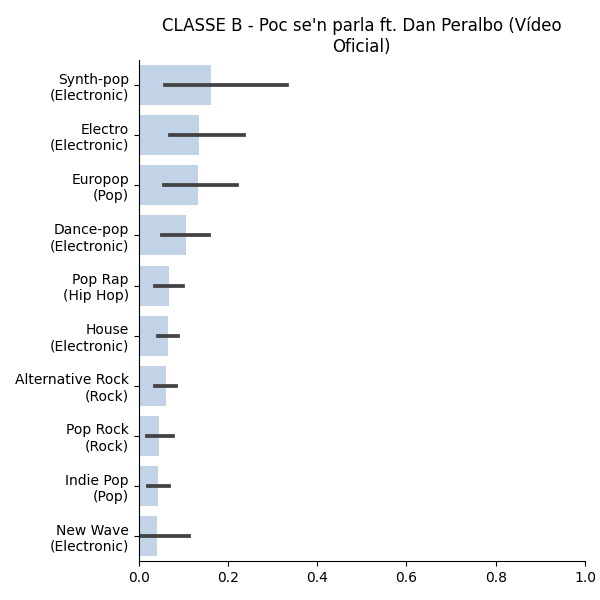

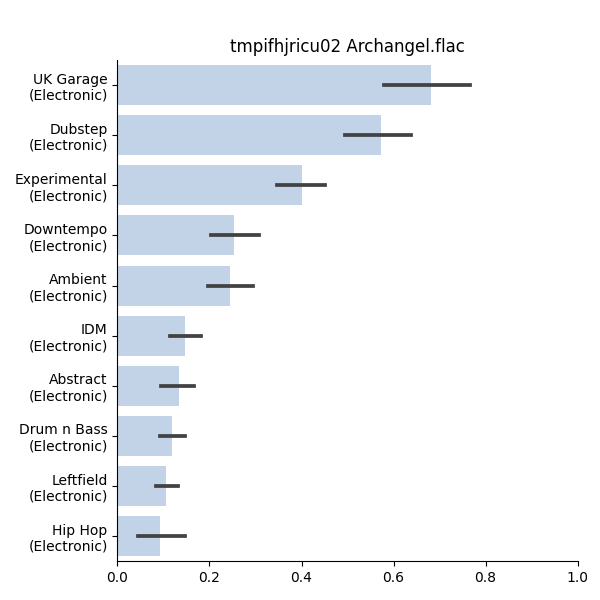

The MAEST models can make predictions for a taxonomy of 400 music styles derived from the public metadata of Discogs.

Downstream Use

The MAEST models have reported good performance in downstream applications related to music genre recognition, music emotion recognition, and instrument detection. Specifically, the original paper reports that the best performance is obtained from representations extracted from intermediate layers of the model.

Out-of-Scope Use

The model has not been evaluated outside the context of music understanding applications, so we are unaware of its performance outside its intended domain.

Since the model is intended to be used within the audio-classification pipeline, it is important to mention that MAEST is NOT a general-purpose audio classification model (such as AST), so it shuold not be expected to perform well in tasks such as AudioSet.

Bias, Risks, and Limitations

The MAEST models were trained using Discogs20, an in-house MTG dataset derived from the public Discogs metadata. While we tried to maximize the diversity with respect to the 400 music styles covered in the dataset, we noted an overrepresentation of Western (particularly electronic) music.

Training Details

Training Data

Our models were trained using Discogs20, MTG in-house dataset featuring 3.3M music tracks matched to Discogs’ metadata.

Training Procedure

Most training details are detailed in the paper and official implementation of the model.

Preprocessing

MAEST models rely on mel-spectrograms originally extracted with the Essentia library, and used in several previous publications.

In Transformers, this mel-spectrogram signature is replicated to a certain extent using audio_utils, which have a very small (but not neglectable) impact on the predictions.

Evaluation, Metrics, and results

The MAEST models were pre-trained in the task of music style classification, and their internal representations were evaluated via downstream MLP probes in several benchmark music understanding tasks. Check the original paper for details.

Environmental Impact

- Hardware Type: 4 x Nvidia RTX 2080 Ti

- Hours used: apprx. 32

- Carbon Emitted: apprx. 3.46 kg CO2 eq.

Carbon emissions estimated using the Machine Learning Impact calculator presented in Lacoste et al. (2019).

Technical Specifications

Model Architecture and Objective

Audio Spectrogram Transformer (AST)

Compute Infrastructure

Local infrastructure

Hardware

4 x Nvidia RTX 2080 Ti

Software

Pytorch

Citation

BibTeX:

@inproceedings{alonso2023music,

title={Efficient supervised training of audio transformers for music representation learning},

author={Alonso-Jim{\'e}nez, Pablo and Serra, Xavier and Bogdanov, Dmitry},

booktitle={Proceedings of the 24th International Society for Music Information Retrieval Conference (ISMIR 2023)},

year={2022},

organization={International Society for Music Information Retrieval (ISMIR)}

}

APA:

Alonso-Jiménez, P., Serra, X., & Bogdanov, D. (2023). Efficient Supervised Training of Audio Transformers for Music Representation Learning. In Proceedings of the 24th International Society for Music Information Retrieval Conference (ISMIR 2023)

Model Card Authors

Pablo Alonso

Model Card Contact

-

Twitter: @pablo__alonso

-

Github: @palonso

-

mail: pablo

dotalonsoatupfdotedu