
Model by Lvmin Zhang

Usage

Provide an input image and a text prompt, written as you would for Stable Diffusion, and specify the type of structure you want to condition on.
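The three inputs described above (an image, a prompt, and a structure type) can be bundled and validated before a request is sent. The sketch below is hypothetical: the field names, the `build_request` helper, and the short structure identifiers are illustrative assumptions derived from the list of structure types in this README, not the model's documented input schema.

```python
from dataclasses import dataclass

# Hypothetical identifiers for the structure types listed under "Model description".
# These names are assumptions for illustration, not documented input values.
STRUCTURE_TYPES = {"canny", "depth", "hough", "normal", "pose", "scribble", "seg"}


@dataclass(frozen=True)
class GenerationRequest:
    """Hypothetical bundle of the three inputs the model needs."""
    image_path: str  # input image whose structure should be preserved
    prompt: str      # text prompt, written as you would for Stable Diffusion
    structure: str   # which kind of structure to extract and condition on


def build_request(image_path: str, prompt: str, structure: str) -> GenerationRequest:
    """Normalize and validate the inputs, then return a request (illustrative only)."""
    structure = structure.lower()
    if structure not in STRUCTURE_TYPES:
        raise ValueError(f"unknown structure type: {structure!r}")
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    return GenerationRequest(image_path, prompt, structure)


req = build_request("room.png", "a cozy cabin interior, warm light", "Depth")
print(req.structure)  # depth
```

Validating the structure type up front catches typos before any image processing or generation work is done.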

Model description

This model uses ControlNet to adapt Stable Diffusion so that it generates images with the same structure as an input image of your choosing. The structure can be extracted using:

- Canny edge detection. The model is trained on data from a Canny edge detector with random thresholds.

- Depth maps. The model is trained on data from MiDaS.

- Hough/MLSD line detection. The model is trained on data from a learning-based deep Hough transform that detects straight lines.

- Normal maps. The model is trained on data with accurate, dense, far-range depth measurements.

- Pose detection. The model is trained on data that uses a learning-based pose estimation method to find humans in internet images.

- Scribble. The model is trained on human-like scribbles synthesized from images using a combination of HED boundary detection and a set of strong data augmentations.

- Semantic segmentation. The model is trained on data from a segmentation model that divides the input image into different semantic regions; those regions are then used as conditioning input when generating a new image.
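The list above notes that the Canny model was trained on edge maps produced with random thresholds. The NumPy sketch below illustrates that idea under simplifying assumptions: it is not the original data pipeline and not a full Canny detector (there is no Gaussian smoothing or non-maximum suppression). It computes a Sobel gradient magnitude, draws a random low/high threshold pair on every call, and applies one step of hysteresis. The threshold ranges and the function names are hypothetical.

```python
import numpy as np


def sobel_magnitude(gray: np.ndarray) -> np.ndarray:
    """Gradient magnitude from 3x3 Sobel kernels (edge-padded, pure NumPy)."""
    kx = np.array([[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]], dtype=float)
    ky = kx.T
    padded = np.pad(gray.astype(float), 1, mode="edge")
    h, w = gray.shape
    gx = np.zeros((h, w))
    gy = np.zeros((h, w))
    # Slide the 3x3 kernels by summing shifted copies of the padded image.
    for i in range(3):
        for j in range(3):
            window = padded[i:i + h, j:j + w]
            gx += kx[i, j] * window
            gy += ky[i, j] * window
    return np.hypot(gx, gy)


def random_threshold_edges(gray, rng, low_range=(50, 100), high_range=(100, 200)):
    """Binary edge map using a low/high threshold pair drawn at random (assumed ranges)."""
    low = rng.uniform(*low_range)
    high = rng.uniform(*high_range)  # ranges do not overlap, so high >= low
    mag = sobel_magnitude(gray)
    strong = mag >= high
    weak = (mag >= low) & ~strong
    # One step of hysteresis: keep weak pixels only if a strong pixel is adjacent.
    padded = np.pad(strong, 1)
    h, w = gray.shape
    near_strong = np.zeros_like(strong)
    for i in range(3):
        for j in range(3):
            near_strong |= padded[i:i + h, j:j + w]
    edges = (strong | (weak & near_strong)).astype(np.uint8) * 255
    return edges, (low, high)


rng = np.random.default_rng(0)
img = np.zeros((32, 32))
img[:, 16:] = 255.0  # a vertical step edge
edges, (low, high) = random_threshold_edges(img, rng)
print(edges.shape, edges.dtype)  # (32, 32) uint8
```

Drawing new thresholds for every training image yields edge maps of varying density, which is one plausible reason to randomize them: the model sees both sparse and detailed edge inputs.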

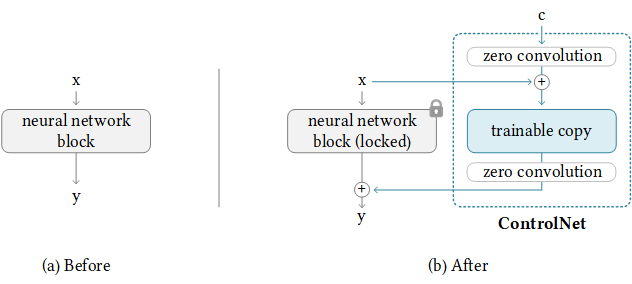

ControlNet

ControlNet is a neural network structure that controls pretrained large diffusion models so they support additional input conditions beyond text prompts. ControlNet learns task-specific conditions end to end, and the learning is robust even when the training dataset is small (fewer than 50k samples). Training a ControlNet is as fast as fine-tuning a diffusion model, and it can be done on a personal device. Alternatively, if powerful computation clusters are available, the model can scale to large amounts of training data (millions to billions of samples). Large diffusion models like Stable Diffusion can be augmented with ControlNets to enable conditional inputs such as edge maps, segmentation maps, and keypoints.
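In the ControlNet paper cited in this README, each pretrained block is kept locked, and a trainable copy of it is attached through "zero convolutions" (1×1 convolutions whose weights and biases start at zero), giving y_c = F(x; Θ) + Z(F(x + Z(c; Θz1); Θc); Θz2). The NumPy sketch below is a toy illustration of that wiring, not the original implementation: the 1×1 conv + ReLU stand-in for a block, the channel count, and the tensor shapes are arbitrary assumptions. It shows why training can start without disturbing the pretrained model: at initialization the added branch outputs exactly zero.

```python
import numpy as np

rng = np.random.default_rng(0)
C = 8  # number of channels (illustrative)


def conv1x1(x, w, b):
    """1x1 convolution over a (C, H, W) feature map."""
    return np.einsum("oc,chw->ohw", w, x) + b[:, None, None]


def block(x, w):
    """Toy stand-in for a pretrained block F(x; theta): bias-free 1x1 conv + ReLU."""
    return np.maximum(0.0, conv1x1(x, w, np.zeros(w.shape[0])))


theta = rng.standard_normal((C, C))  # locked weights (never updated)
theta_c = theta.copy()               # trainable copy, initialized from the locked weights
# Zero convolutions: weights and biases start at exactly zero.
z1_w, z1_b = np.zeros((C, C)), np.zeros(C)
z2_w, z2_b = np.zeros((C, C)), np.zeros(C)


def controlnet_block(x, c):
    """y_c = F(x; theta) + Z(F(x + Z(c; z1); theta_c); z2)."""
    y = block(x, theta)                              # locked path
    h = block(x + conv1x1(c, z1_w, z1_b), theta_c)   # trainable path sees the condition
    return y + conv1x1(h, z2_w, z2_b)


x = rng.standard_normal((C, 4, 4))  # features from the diffusion model
c = rng.standard_normal((C, 4, 4))  # encoded condition (e.g. an edge map)
# At initialization the ControlNet branch adds zero, so the output matches the original block.
print(np.allclose(controlnet_block(x, c), block(x, theta)))  # True
```

Once training updates the zero-convolution weights, the condition starts to influence the output, while the locked weights preserve what the pretrained model already knows.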

Original model & code on GitHub

Citation

@misc{https://doi.org/10.48550/arxiv.2302.05543,
  doi = {10.48550/ARXIV.2302.05543},
  url = {https://arxiv.org/abs/2302.05543},
  author = {Zhang, Lvmin and Agrawala, Maneesh},
  keywords = {Computer Vision and Pattern Recognition (cs.CV), Artificial Intelligence (cs.AI), Graphics (cs.GR), Human-Computer Interaction (cs.HC), Multimedia (cs.MM), FOS: Computer and information sciences, FOS: Computer and information sciences},
  title = {Adding Conditional Control to Text-to-Image Diffusion Models},
  publisher = {arXiv},
  year = {2023},
  copyright = {arXiv.org perpetual, non-exclusive license}
}