Explore

Fine-tune FLUX fast

Customize FLUX.1 [dev] with the fast FLUX trainer on Replicate

Train the model to recognize and generate new concepts using a small set of example images, for specific styles, characters, or objects. It's fast (under 2 minutes), cheap (under $2), and gives you a warm, runnable model plus LoRA weights to download.

Featured models

bytedance / seedream-3

A text-to-image model with support for native high-resolution (2K) image generation

bytedance / seedance-1-pro

A pro version of Seedance that offers text-to-video and image-to-video support for 5s or 10s videos, at 480p and 1080p resolution

bytedance / seedance-1-lite

A video generation model that offers text-to-video and image-to-video support for 5s or 10s videos, at 480p and 720p resolution

kwaivgi / kling-v2.1

Use Kling v2.1 to generate 5s and 10s videos in 720p and 1080p resolution from a starting image (image-to-video)

google / veo-3

Sound on: Google’s flagship Veo 3 text-to-video model, with audio

google / imagen-4-ultra

Use this ultra version of Imagen 4 when quality matters more than speed and cost

replicate / fast-flux-trainer

Train subjects or styles faster than ever

black-forest-labs / flux-kontext-pro

A state-of-the-art text-based image editing model that delivers high-quality outputs with excellent prompt following and consistent results for transforming images through natural language

black-forest-labs / flux-kontext-max

A premium text-based image editing model that delivers maximum performance and improved typography generation for transforming images through natural language prompts

Official models

Official models are always on, maintained, and have predictable pricing.

I want to…

Generate images

Models that generate images from text prompts

Generate videos

Models that create and edit videos

Edit images

Tools for editing images

Upscale images

Upscaling models that create high-quality images from low-quality images

Generate speech

Convert text to speech

Transcribe speech

Models that convert speech to text

Use LLMs

Models that can understand and generate text

Caption videos

Models that generate text from videos

Make 3D stuff

Models that generate 3D objects, scenes, radiance fields, textures and multi-views.

Restore images

Models that improve or restore images by deblurring, colorization, and removing noise

Generate music

Models to generate and modify music

Caption images

Models that generate text from images

Make videos with Wan2.1

Generate videos with Wan2.1, the fastest and highest-quality open-source video generation model.

Use handy tools

Toolbelt-type models for videos and images.

Control image generation

Guide image generation with more than just text. Use edge detection, depth maps, and sketches to get the results you want.

Extract text from images

Optical character recognition (OCR) and text extraction

Chat with images

Ask language models about images

Sing with voices

Voice-to-voice cloning and musical prosody

Get embeddings

Models that generate embeddings from inputs

Use a face to make images

Make realistic images of people instantly

Remove backgrounds

Models that remove backgrounds from images and videos

Try for free

Get started with these models without adding a credit card. Whether you're making videos, generating images, or upscaling photos, these are great starting points.

Use the FLUX family of models

The FLUX family of text-to-image models from Black Forest Labs

Use official models

Official models are always on, maintained, and have predictable pricing.

Enhance videos

Models that enhance videos with super-resolution, sound effects, motion capture and other useful production effects.

Detect objects

Models that detect or segment objects in images and videos.

Use FLUX fine-tunes

Browse the diverse range of fine-tunes the community has custom-trained on Replicate

Popular models

SDXL-Lightning by ByteDance: a fast text-to-image model that makes high-quality images in 4 steps

This is the fastest Flux Dev endpoint in the world. Contact us for more at pruna.ai

Practical face restoration algorithm for *old photos* or *AI-generated faces*

Return CLIP features for the clip-vit-large-patch14 model

multilingual-e5-large: A multi-language text embedding model

whisper-large-v3, incredibly fast, powered by Hugging Face Transformers! 🤗

Latest models

DeepSeek-V3-0324 is the leading non-reasoning model, a milestone for open source

Best-in-class clothing virtual try-on in the wild (non-commercial use only)

Recraft V3 (code-named red_panda) is a text-to-image model that can generate long text and images in a wide range of styles. As of today, it is SOTA in image generation, as shown by the Text-to-Image Benchmark by Artificial Analysis

Recraft V3 SVG (code-named red_panda) is a text-to-image model that can generate high-quality SVG images, including logotypes and icons. The model supports a wide range of styles.

Generates MagicaVoxel VOX models, using flux dev + hunyuan3d-2. Can generate high detail and low detail models at varying resolutions.

epicrealism-naturalsinfinal-SD1.5-by-epinikion + perfectdeliberate by Desync + More Details by Lykon

Extract the first or last frame from any video file as a high-quality image

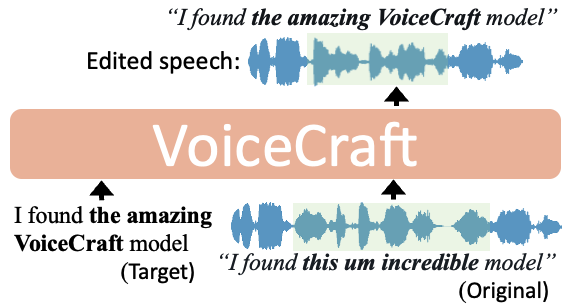

CSM (Conversational Speech Model) is a speech generation model from Sesame that generates RVQ audio codes from text and audio inputs

Sa2VA: Marrying SAM2 with LLaVA for Dense Grounded Understanding of Images and Videos

CosyVoice2-0.5B: Scalable Streaming Speech Synthesis with Large Language Models

The Flux.1-dev-Controlnet-Upscaler model by www.androcoders.in

Hunyuan3D-2mv is fine-tuned from Hunyuan3D-2 to support multiview controlled shape generation.

ShieldGemma 2 is a model trained on Gemma 3's 4B IT checkpoint for image safety classification across key categories. It takes in images and outputs safety labels per policy.

Fast, efficient image variation model for rapid iteration and experimentation.

Open-weight image variation model. Create new versions while preserving key elements of your original.

SDXL-Lightning by ByteDance: a fast text-to-image model that makes high-quality images in 4 steps

Wan2.1 14B 480p LoRA inference via Diffusers (Work in progress)

Open-weight depth-aware image generation. Edit images while preserving spatial relationships.

Open-weight edge-guided image generation. Control structure and composition using Canny edge detection.